CAVEAT EMPTOR

CAVEAT EMPTOR

Before we get started, we need to warn you that the following material is not for the faint of heart. It involves mass SQL database operations, which, if not done done properly, can HOSE YOUR DATABASE BUT GOOD.

There, we said it. Don’t do this, unless you are a Webservant, setting up a Root Server.

BASIC PREMISE

Existing meeting data can take many forms. It can be complex, highly-related SQL databases, or it could be a piece of paper with white-out used to mask old meetings, and new meetings scrawled on top.

One of the first things that we will do, is consolidate that data into a fairly common form.

We have a demonstration dataset and database in the source code repository, and will be using these for our examples.

These are the sample/example files that we provide for this operation:

Actual Implementation Files:

- bmlt_conversion_tables.php (This is the file that you will edit -this is the default table, set up for a standard NAWS CSV dump).

- bmlt_import.php (This is the main functionality file. Don’t edit this).

Default (NAWS Dump into a Default Root Server) Files:

- EmptyDatabaseExample.sql (This is an example “primed” database for the Show Me Region).

- meetings.csv (This is a sample Comma-Separated Values file that contains a large dump from NAWS for the ShowMe Region. It is in standard NAWS format).

The previous two files can be used to run a default NAWS dump format in a Root Server that is “primed” for a fictional “Show Me Region.” The data is not actually valid for the current Show Me Region, but this will pretend that it is. It will give you an idea of how this works.

The way that you run this, is that you take the 2 files in the previous list (bmlt_conversion_tables.php and bmlt_import.php), and place them in the same directory as your main_server directory and auto-config.inc.php file.

Once this is done, use phpMyAdmin (or a similar tool) to replace the contents of your BMLT directory with the contents of EmptyDatabaseExample.sql.

This will give you a primed directory with several Service bodies and users, but no meetings (all the users have “godadmin” as the password, and the Server Admin is called “godadmin”).

You then place the sample data file (meetings.csv) into the same directory as the bmlt_import.php script, and run the bmlt_import.php script.

This will populate the directory with the example data. After running the script, you will be able to log into the Root Server, and see the meetings that have been added. Some will be “unpublished.” This is deliberate. We modified some of the data to explicitly fail to geocode, and some of the meetings are categorized as institutional (restricted attendance)

You can also tell the script to report progress at 4 different levels of detail: minimal, medium, verbose or prolix. Default is “medium.”

You do that by appending “?log=XXXXX” to the script URL, like so:

http://example.com/root-server/bmlt_import.php?log=minimal

http://example.com/root-server/bmlt_import.php?log=medium (or http://example.com/root-server/bmlt_import.php)

http://example.com/root-server/bmlt_import.php?log=verbose

http://example.com/root-server/bmlt_import.php?log=prolix

Below are links to HTML files that display the expected results of running these scripts.

Sample Report Files:

The following dumps were made via an import of the standard NAWS-format (meetings.csv) dump, using the default bmlt_conversion_tables.php file.

- ExampleImportScriptReport-MINIMAL.html (This is a sample report from the given files in minimal output vebosity mode).

- ExampleImportScriptReport-MEDIUM.html (This is a sample report from the given files in medium -default- output vebosity mode).

- ExampleImportScriptReport-VERBOSE.html (This is a sample report from the given files in verbose output vebosity mode).

- ExampleImportScriptReport-PROLIX.html (This is a sample report from the given files in “prolix” -everything but the kitchen sink- output vebosity mode).

Sample Non-Standard Implementation Data Files:

These files show a different structure from the default ones above.

- simple-import-bmlt_conversion_tables.php (This is a version of the conversion tables file that is “tuned” for the two example files).

- simple-import-meetings.csv (This is a sample Comma-Separated Values file with a non-NAWS format dump with a small subsection of the ShowMe Region).

- simple-import-meetings.tsv (This is a sample Tab-Separated Values file with a non-NAWS format dump with a small subsection of the ShowMe Region).

STEP ONE: CREATE THE DESTINATION DATABASE

Before we start, we need to create the “skeleton” of the destination site. This operation will work on BMLT Root Server databases with existing data, but that’s a bit more complex (not much) than what we’ll cover here. For our examples, we’ll assume that we are creating a new site.

Assuming that you are starting a new site, you should run the install wizard to set up your Root Server.

The next thing that you should do, is create all of the Service bodies that will hold meetings.

After that, make sure that you have done all the format code editing that you want. It will be difficult to change formats after all the meetings have been added.

Before going on, you should record the ID (

in the default table) of each Service body, as you will be using this in the map. Additionally, you should record the format code (The

column in the default table) of each format in the default language of the Root Server (Formats can be assigned different codes for different languages. The importer uses the default Root Server language, so you should use this language for mapping the format codes).

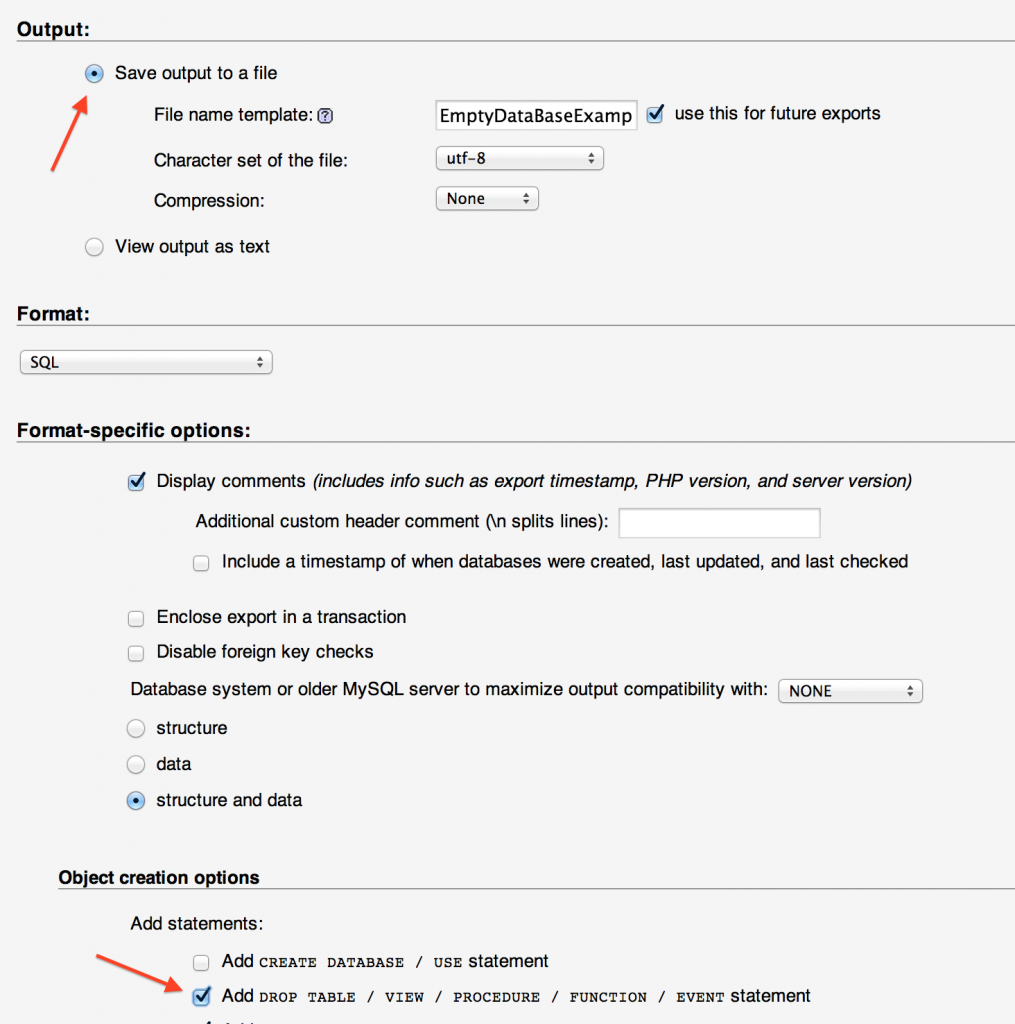

STEP TWO: BACK UP YOUR INITIAL DATABASE

The importance of backups cannot be overstated. You need to have a firm backup strategy for your site. This backup will be an SQL dump of the “primed” database. This will have the Admin account, the formats, users and Service bodies; but no meetings.

We use phpMyAdmin, the most common Web-based database utility (usually provided by hosting companies). You make a backup by selecting the database, then choosing “Export.” Save the database as a file. It’s a very good idea to test it, and make sure that you have it correctly saved before proceeding.

The Export Option

PROTIP: Make sure that you select

. This means that you don’t need to keep deleting all the tables in the database before each restore.

The Save As File and Drop Table Option

Here is our example database backup. If you install this, it will set the stage for the rest of the examples.

STEP THREE: CREATE A TSV OR CSV FILE

If you have a standard dump from NA World Services, and will use the standard format codes:

The simplest way to import data is to get a dump of your database from NAWS, and simply rename the CSV file they send you to “meetings.csv”. Place this into the same directory as the main_server, and the two scripts, unaltered from the repository:

Just leave these there, and you will call the import script via HTTP, like so:

Assume that the URI to your main server is http://example.com/root-server/main_server/, the import script will be http://example.com/root-server/bmlt_import.php

Go directly to Step Five. No need for all this frou-frou.

Otherwise:

No matter what the initial form is, we need to convert it to a simple text file before executing our import process. Most databases have the ability to directly export data into CSV/TSV form, but it could also require some data entry and grooming.

The forms that we want are either CSV (Comma-Separated Values) or TSV (Tab-Separated Values).

These are common data formats. Programs like Microsoft Excel will usually emit both, but some programs may prefer one to the other (Excel prefers CSV).

Both formats assign the top (first) line to be a “header” (or “key”) line. This line assigns the column names that we need to import the data. Each subsequent line represents one single meeting.

The CSV/TSV file needs to be a standard text file (Use the “tsv” extension for tab-separated, or “csv” for comma-separated). The file text should be in UTF-8 (NOT UTF-8 BOM).

The line endings should be UNIX-style (Line Feed Only).

To Quote or Not to Quote

You may enclose values between delimiters in double-quotes (“). For example:

"Value 1","Value,2",Value 3,"Value \"4\""

Note that “Value,2” has a comma in it. Since this is a CSV line, the quotes are required, so the parser doesn’t get confused by the comma. In a TSV file, the quotes would not be necessary (but they are often provided anyway).

Note that ‘Value “4”‘ has quotes as part of the value. These are escaped (preceded by a backslash -\). It’s a good idea to escape quotes.

“Value 3” is not quoted. There is no need. In fact, the only one of these values that would require quotes would be “Value,2”, because of the comma. If this were a TSV file, then even that would not be necessary.

In any case, it doesn’t hurt to quote every value, and you should consider doing that.

However you need to do it, you should get your meeting data into a CSV or TSV file. Once this is done, we can move on to the next step

The above line may look mixed up, but it is perfectly legal for our purposes. The below line would also be legal (“->” represents a tab character):

Value 1->Value,2->Value 3->Value \"4\"

With CSV and TSV, you have to align every column. That means that a meeting that does not have a value for a particular column (for example, a “borough” field for a rural meeting would probably be nonexistent, but would be required for an urban meeting), would have a blank (“”) value for that column.

Examples

We have 2 example files in our source code repository:

These files represent the same 24 meetings (in 12 different Service bodies) in the Show Me Region (Midwest).

Formats

We describe formats as sets of format codes, in a list, separated by commas (so that means they usually need to be surrounded by double-quotes in a CSV file).

For example, look at these sections of some rows of the

file:

"location_municipality","location_province","location_postalcode_1","formats" · · · "Columbia","MO","65203","C,L,WC" "Sedalia","MO","65301","O,T" "Kirksville","MO","63501","O,T,WC" "Moberly","MO","65270","C"

STEP FOUR: SET UP OUR KEY MAPS

The file that you will now edit will be

. You will change some values to map your columns and formats to the ones used by the BMLT.

This file needs to be in the same directory as the ”

” script.

The File Name

The first thing we’ll set up will be the filename.

There is a good chance that you will stick with the default, which is ”

” (tab-separated), in the same directory as the executing ”

” script:

function bmlt_get_filename()

{

return 'meetings.tsv';

}

You could, if you wanted, move the script into a different directory:

function bmlt_get_filename()

{

return '../../meetings.tsv';

}

You need to remember that this is not an absolute POSIX path. It is a relative path from the directory containing the ”

” file. For example, if the executing file is in the ”

” directory, the above specification would place the input file in ”

” directory.

The Root Server Directory

You can choose to have the script executing in a separate directory from the Root Server. The default is that the script runs from the same directory as ”

“. Not in the ”

” directory; just outside of it. You can change this:

function bmlt_get_root_dir()

{

return ../public_html/bmlt/main_servr;

}

If we were in the ”

” directory, this would point to the above Root Server directory.

Again, any path that you place there will be relative to the directory in which the ”

” script is executing.

Meeting Fields

The next one is important. This is where you map the columns in your CSV/TSV file to the available database slots in the BMLT server.

We use the fields available, as mapped from the imported ones, like so:

function bmlt_get_field_conversion_table()

{

$conversion_table = array (

'service_body' => 'service_body_bigint',

'location_municipality' => 'location_municipality',

'location_sub_province' => 'location_sub_province',

'location_province' => array ( 'location_province' => 'MO' ),

'meeting_name' => array ( 'meeting_name' => 'NA Meeting' ),

'weekday' => 'weekday_tinyint',

'start_time' => 'start_time',

'duration' => array ( 'duration' => '1:00:00' ),

'formats' => array ( 'formats' => 'C' ),

'comments' => 'comments',

'location_info' => 'location_info',

'location_name' => 'location_text',

'location_street' => 'location_street',

'location_postalcode_1' => 'location_postalcode_1',

'longitude' => 'longitude',

'latitude' => 'latitude'

);

return $conversion_table;

}

The way this works, is that the strings on the left side correspond to the column names in the TSV/CSV file, and the strings on the right correspond to the available slots in the BMLT meeting record.

If you want to provide a default value (applied if there is no value in the TSV/CSV file), then you specify the right side as an associative array of just one element, with the key being the BMLT key name, and the value being the default.

Remember that this is a PHP associative array, so don’t forget the “=>” and the comma at the end of the line.

Format Codes

We do the same with format codes. You use the format keys, and the ones we use on the right side are the keys in the default server language of the Root Server (You can have different format keys in different languages).

function bmlt_get_format_conversion_table()

{

$conversion_formats_table = array (

'O' => 'O',

'C' => 'C',

'L' => 'BK',

'S' => 'St',

'T' => 'To',

'CL' => 'CL',

'SM' => 'Sm',

'SP' => 'So',

'W' => 'W',

'M' => 'M',

'WC' => 'WC'

);

return $conversion_formats_table;

}

One difference is that you do not specify an array for a default value. You can specify one for the entire ”

” field, but not one for an individual format (it doesn’t make sense).

STEP FIVE: RUN THE IMPORTER

Now that the script is ready to go, you make sure that the ”

” file is accessible in the HTTP section of the server (it is a PHP file, so it must be called through the server). You will call the ”

” file to run the import. You can override the settings in the ”

” file, by supplying command arguments:

-

root_dir

specifies a relative (to the script) POSIX path to the root server.

-

filename

specifies a relative POSIX path to the input file.

The conversion is likely to take some time; depending on the size of the import, and whether or not longitude/latitude are supplied (if not, then the script needs to look them up, and that can take a while).

If all goes well, you will receive a report like the ones linked above.

Note that geocoding errors are flagged. If the geocoder indicated it had an ambiguous result (could be nothing, could be on a different continent), then it will have an orange bar. If it outright failed, the bar will be red.

Don’t expect to get it right the first time. This is a non-trivial process. Feel free to use the small example we provide with the scripts to practice.

You will almost certainly be using your database backup a lot.

STEP SIX: REMOVE THE SCRIPT

Never forget to remove the script. It is very dangerous, and can be arbitrarily run by anyone, which could damage your database.

- The Root Server

- Setting Up A New Root Server

- Importing Data From Existing Meeting Lists

- Updating A BMLT Root Server

- Using the New BMLT Server

- Additional Configuration Parameters